Beyond the Cloud: Native Broadcasting with ATSC 3.0

Jay Willis

Abstract – The broadcast industry is undergoing a major shift as broadcasters increasingly integrate cloud-based solutions to enhance the efficiency, reliability, and scalability of their operations. The advent of ATSC 3.0—a next-generation broadcast standard—enables the entire broadcast operations chain to become ‘cloud-native,’ opening unprecedented opportunities for flexible and resilient deployment strategies. This paper proposes a unified approach to cloud-to-terrestrial broadcasting, examining how ATSC 3.0’s capabilities can support such a fully integrated broadcast infrastructure. By analyzing current and potential implementations, we highlight the transformative potential of cloud-based broadcasting for optimizing playout, signal distribution, and emission. The goal is to provide a blueprint for broadcasters navigating the shift to a future-ready, cloud-centric operational paradigm.

Introduction

I joined ONE Media in 2019 with the expectation of deploying ATSC 3.0 across many Sinclair stations. The prospect of traveling to each site, installing equipment alongside local engineers, and providing high-level training was exciting. As we approached the deployment of ATSC 3.0 in Las Vegas in 2020, our team worked closely with vendors to ensure that the equipment and software met the new standard.

Then, COVID-19 disrupted everything. The Las Vegas deployment was originally scheduled around NAB 2020, but with travel completely shut down, we had to devise a new plan. Instead of on-site installations, the team pre-configured as much of the equipment as possible, shipped it to local stations, and remotely guided local engineers through the setup process.

Broadcast technology continues to evolve rapidly. We’ve moved from FPGA-based encoding to software-based encoding, from the ATSC 1.0 standard to the enhanced ATSC 3.0 standard, and from SDI cabling to IP-based solutions like SMPTE ST 2110 and NDI. Now, the industry is undergoing another major shift: migration from on-premises hardware to the cloud.

Sinclair has been at the forefront of this transition, consolidating traditional master control operations into a centralized cloud-based playout model. However, cloud migration isn’t just about reducing local station operations; it’s about enhancing flexibility, improving efficiency, strengthening IT security, and replacing aging hardware. The next step is to build on our early cloud playout work and extend the cloud’s capabilities into the ATSC 3.0 transmission chain. This will allow us to deliver the final master control output to a cloud-based ATSC 3.0 air chain and eliminate multiple encoding and re-encoding steps.

This paper presents a unified approach to cloud-based broadcasting, detailing the transition from cloud-based playout to ATSC 3.0 STLTP emission. It also serves as a reflection on the joint NAB 2024 demonstration conducted by AWS, Ateme, and Enensys, showcasing the potential of end-to-end cloud workflows in broadcasting.

ATSC 1.0 vs. ATSC 3.0

ATSC 1.0 is a fully constrained “last century” standard that offers very little flexibility. It relies on the MPEG-2 codec and transport, standards that are now over 30 years old. The transmission bitrate is fixed at 19.39 Mbps. Additionally, 8-VSB modulation suffers from multipath interference, which occurs when copies of a signal arrive at a receiver at different times due to reflections, dispersion, and other terrestrial path anomalies. In analog TV, multipath interference might produce a ghosted or fuzzy picture; in ATSC 1.0, it can lead to complete signal loss, preventing any decoding.
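The fixed bitrate follows directly from the 8-VSB frame structure. As a worked illustration, the Python sketch below derives it from the commonly published 8-VSB framing constants; these constants are not stated in this paper and are included here only to show why the rate cannot be adjusted.

```python
from fractions import Fraction

# Commonly published 8-VSB framing constants (assumed here, not taken from this paper).
SYMBOL_RATE_HZ = Fraction(4_500_000, 286) * 684  # about 10.762 million symbols per second
SYMBOLS_PER_SEGMENT = 832                        # 828 data symbols + 4 segment-sync symbols
SEGMENTS_PER_FIELD = 313                         # 312 data segments + 1 field-sync segment
DATA_SEGMENTS_PER_FIELD = 312
TS_PACKET_BITS = 188 * 8                         # each data segment carries one 188-byte TS packet


def atsc1_ts_bitrate_bps() -> Fraction:
    """Return the ATSC 1.0 transport stream rate in bits per second."""
    segments_per_second = SYMBOL_RATE_HZ / SYMBOLS_PER_SEGMENT
    data_segments_per_second = segments_per_second * Fraction(
        DATA_SEGMENTS_PER_FIELD, SEGMENTS_PER_FIELD
    )
    return data_segments_per_second * TS_PACKET_BITS


rate_mbps = float(atsc1_ts_bitrate_bps()) / 1e6
print(f"ATSC 1.0 transport stream rate: {rate_mbps:.2f} Mbps")
```

Every term in the calculation is fixed by the standard, so the result is always about 19.39 Mbps regardless of content or reception conditions.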

ATSC 3.0 addresses these issues with Orthogonal Frequency Division Multiplexing (OFDM), bootstrap-enabled variable framing structures, and IP transport, among other features. By combining OFDM with guard intervals, the standard effectively mitigates multipath interference, transforming it from a problem into an advantage: echoes that arrive within the guard interval no longer corrupt the symbol. This approach allows broadcasters to deploy multiple synchronized transmitters within a market, improving coverage and reception.
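To give a sense of scale, the sketch below converts a guard interval length into the largest path difference an echo can have while still arriving inside the guard interval. It assumes a baseband sample rate of 6.912 MHz for a 6 MHz channel and uses 192 and 4864 samples as example guard interval lengths; these figures are assumptions for illustration and are not taken from this paper.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458
SAMPLE_RATE_HZ = 6_912_000  # assumed baseband sample rate for a 6 MHz channel


def guard_interval_us(samples: int) -> float:
    """Duration of a guard interval of the given length, in microseconds."""
    return samples / SAMPLE_RATE_HZ * 1e6


def max_path_difference_km(samples: int) -> float:
    """Largest extra path length (km) whose echo still lands inside the guard interval."""
    seconds = samples / SAMPLE_RATE_HZ
    return SPEED_OF_LIGHT_M_PER_S * seconds / 1000


for samples in (192, 4864):  # example guard interval lengths, in samples
    print(
        f"{samples} samples: {guard_interval_us(samples):.1f} us, "
        f"up to {max_path_difference_km(samples):.1f} km of path difference"
    )
```

Under these assumptions the shortest example tolerates roughly 8 km of path difference and the longest roughly 211 km, which is why longer guard intervals suit widely spaced synchronized transmitters.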

ATSC 3.0 – A Flexible, IP-Based Future

ATSC 3.0 is a revolutionary shift, providing unmatched flexibility and future-proofing for emerging codecs and technologies. Unlike its predecessor, ATSC 3.0 is entirely IP-based, meaning it uses the same packetized data structures as modern internet communications. This design enables NextGen TV services to be delivered seamlessly to televisions, set-top boxes, home gateways, and a wide variety of other connected and unconnected portable and mobile devices.

Since ATSC 3.0 is fully IP-based, it unlocks numerous new capabilities beyond traditional broadcasting. One key advantage is efficient one-to-many data delivery, which has applications far beyond television. For example, broadcasters can transmit enhanced GPS signals for precision agriculture, autonomous vehicles, and even robotic lawnmowers, offering location accuracy beyond traditional GPS capabilities. The standard also enables geo-targeted emergency alerts, allowing broadcasters to send alerts to viewers in specific areas rather than to an entire designated market area (DMA).

ATSC 3.0 also provides unprecedented flexibility in encoding parameters. Broadcasters can dynamically adjust bitrate and resolution based on content requirements, optimizing transmission quality in real time. Additionally, the standard supports the creation of new Broadcast Enabled Streaming TV (BEST) channels for breaking news or live events without requiring viewers to rescan their TVs.

Another significant advancement is the integration of interactive content. Think of ATSC 3.0’s A/344 standard as a ‘browser’, defining the framework for broadcasting HTML-based overlays within broadcaster applications, effectively extending web-like experiences to television screens. This feature allows for enhanced viewer engagement, such as interactive news updates, sports statistics, or personalized content recommendations.

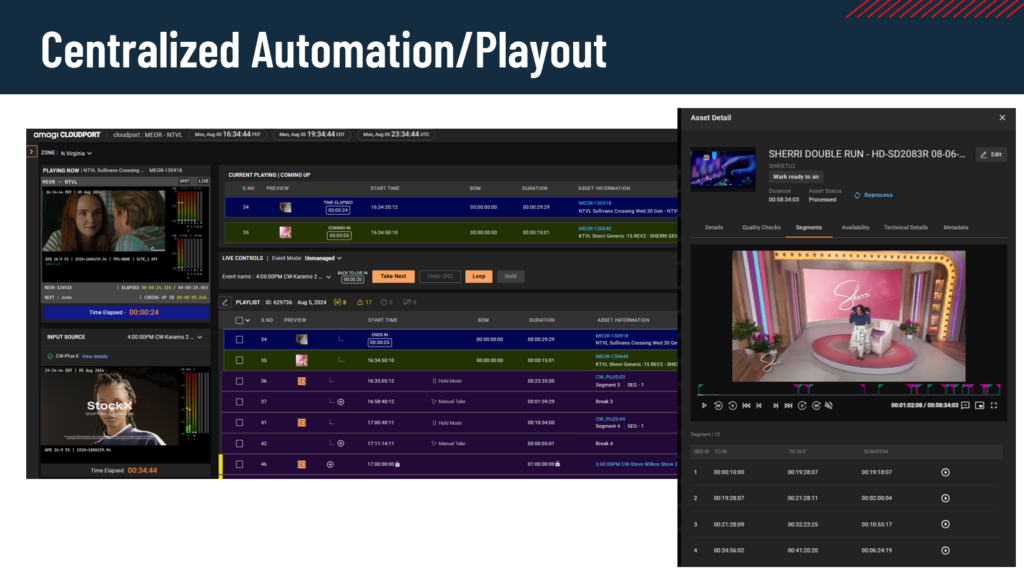

Harnessing the Cloud

The cloud is nothing new: AWS, Microsoft Azure, and Google Cloud have been around for years, and broadcast technology can now modernize itself by leveraging cloud-based infrastructure. There are significant efficiency gains with cloud-based playout. Previously, each station had to receive a file, transcode it, and ingest it into a playout system manually. Operators then had to mark the segment in/out points before adding the content to a playlist. Now, with cloud-based workflows, we transcode the file once, segment it once for all stations, and make it instantly available for playback, eliminating redundant processing. For example, a syndicated show like Jeopardy or Wheel of Fortune, which airs across multiple markets, previously required separate processing at each station. Cloud playout removes this inefficiency.

Since we now operate from a unified cloud source, we can ensure consistent quality across all markets. We have full visibility into the transcode quality of every stream, as well as the reliability of the underlying infrastructure. Well-designed cloud environments inherently offer built-in redundancy, with hardware and data centers designed for high availability. Additionally, cloud infrastructure allows for dynamic scaling—resources can be increased or reduced as needed, optimizing costs by avoiding unnecessary CPU and memory expenses.

Bringing ATSC 3.0 to the cloud will accelerate air chain deployment. Engineers will no longer need to manually install drivers on servers or ensure BIOS versions are up to date. Instead of waiting for limited engineering resources to install hardware, we can provision EC2 instances in the cloud almost instantly with infrastructure as code (IaC).
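As a minimal sketch of what such provisioning could look like, the Python below emits an AWS CloudFormation template describing one EC2 instance per air chain component. The component names, instance sizes, tag values, and the `AmiId` parameter are assumptions chosen for illustration; they do not describe the configuration used in our deployment.

```python
import json


def air_chain_template(components: dict[str, str]) -> dict:
    """Build a CloudFormation template with one EC2 instance per component.

    `components` maps a component name to an EC2 instance type.
    """
    resources = {}
    for name, instance_type in components.items():
        resources[f"{name}Instance"] = {
            "Type": "AWS::EC2::Instance",
            "Properties": {
                "InstanceType": instance_type,
                "ImageId": {"Ref": "AmiId"},  # machine image supplied at deploy time
                "Tags": [{"Key": "Name", "Value": f"atsc3-{name.lower()}"}],
            },
        }
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Illustrative ATSC 3.0 air chain instances",
        "Parameters": {"AmiId": {"Type": "AWS::EC2::Image::Id"}},
        "Resources": resources,
    }


# Hypothetical sizing, for illustration only.
template = air_chain_template(
    {"Encoder": "c6a.4xlarge", "Packager": "c6a.xlarge", "Gateway": "c6a.xlarge"}
)
print(json.dumps(template, indent=2))
```

Because the whole air chain is described in a single document, the same template can be reused for each additional market by changing only its inputs.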

Cloud adoption, however, presents challenges. Key concerns include how to transport video into the cloud while ensuring sufficient bandwidth and network redundancy, and training operations teams to support this modern technology. This shift requires a fundamental change in how technical teams operate. We must consider how we architect cloud-based workflows optimally, provide support, and staff for this transformation.

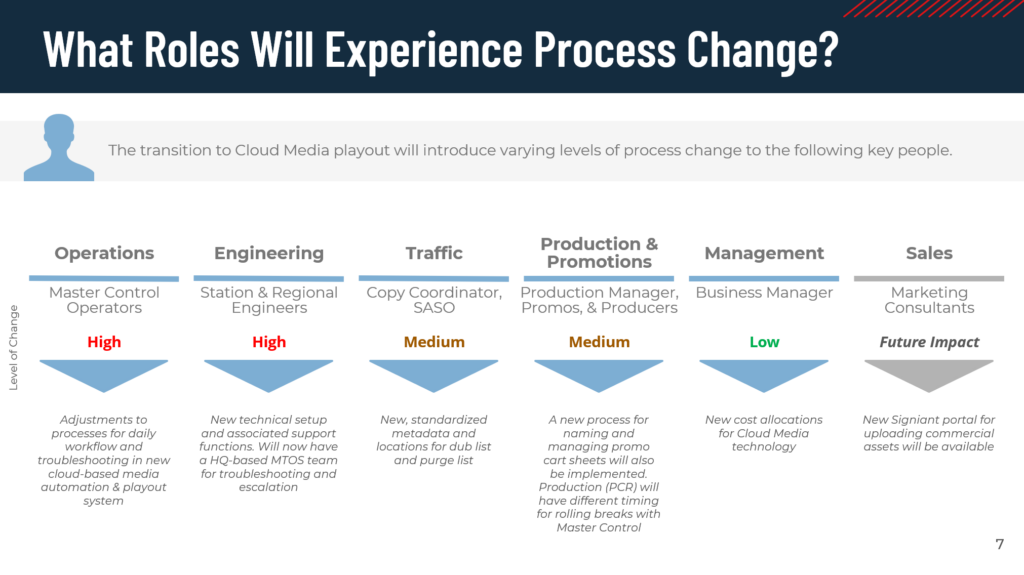

Figure 1 outlines departments impacted by cloud-based playout. As shown, there are significant shifts not only in engineering and operations but also in areas like traffic and promotions, which must adapt their processes to the new workflow.

The Playbook

Sinclair began its cloud transition in 2022, and while the process has been gradual, considerable progress has been made. As of February 2025, we have successfully migrated over 100 services.

At a basic level, cloud playout is simple—a file is placed on a playlist and played to an output. However, as we integrate master control functions, such as handling live news, network feeds, and breaking news, the complexity increases.

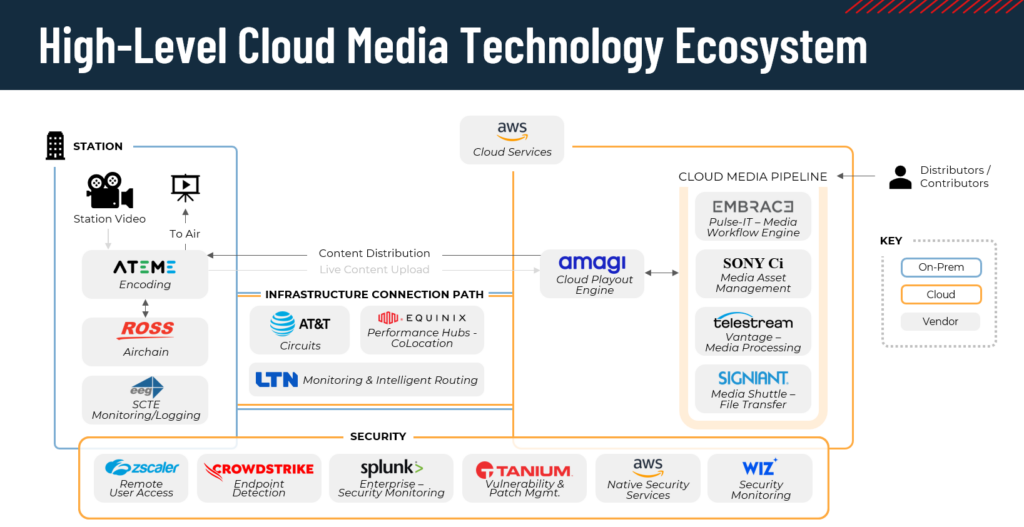

The high-level diagram in Figure 2 illustrates this workflow:

On the left side, live-to-air video is transmitted from the TV station (the “ground”) to the cloud. Examples of live-to-air video include news control room feeds for the daily newscast, network feeds from each market, and local programming feeds that go directly on-air.

At most sites, we use Ateme Titan Edge to encode a transport stream, which is then sent over Zixi to AWS. This is our transport into the cloud, but any transport stream encoder will work. Transport streams are typically carried over UDP (User Datagram Protocol). UDP is susceptible to packet loss, jitter, and out-of-order delivery because it provides no acknowledgment, retransmission, or ordering guarantees. To mitigate this, we use protected streaming protocols such as SRT, Zixi, and RIST. Each protocol improves on basic UDP transport by adding error recovery, retransmission, and adaptive bitrate control [2].
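The retransmission idea shared by these protocols can be illustrated with a deliberately simplified Python sketch. It models a single round of loss detection and resend using sequence numbers; it is not the wire format or API of SRT, Zixi, or RIST, and all names in it are hypothetical.

```python
def deliver_with_retransmission(packets, lost_on_first_try):
    """Simulate one round of gap detection and retransmission.

    packets: list of payloads; the list index acts as the sequence number.
    lost_on_first_try: set of sequence numbers dropped by the network.
    Returns (payloads in original order, sequence numbers that were resent).
    """
    # The sender keeps a copy of every packet until delivery is confirmed.
    sender_buffer = dict(enumerate(packets))

    # First pass: the receiver gets everything the network did not drop.
    received = {
        seq: data for seq, data in sender_buffer.items() if seq not in lost_on_first_try
    }

    # The receiver spots gaps in the sequence numbers and asks for a resend.
    missing = [seq for seq in sender_buffer if seq not in received]
    for seq in missing:
        received[seq] = sender_buffer[seq]

    # Packets are released to the decoder in sequence order.
    in_order = [received[seq] for seq in sorted(received)]
    return in_order, missing


packets = [b"p0", b"p1", b"p2", b"p3", b"p4"]
output, resent = deliver_with_retransmission(packets, lost_on_first_try={1, 3})
print(output == packets, resent)
```

Real implementations bound this process with a latency budget: a packet that cannot be recovered before its playout deadline is skipped rather than awaited indefinitely.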

Once the live video arrives in AWS, the transport stream feeds the Amagi cloud playout system, which serves as our cloud-based master control. Amagi’s centralized architecture allows for significant optimization of media asset and live ingest. It also provides cross-region redundancy: infrastructure runs in both the AWS us-east-1 and us-west-2 regions for a fully geo-redundant architecture.

On the right side of the AWS workflow, we see the Cloud Media Pipeline, which handles media file imports. The majority of our stations’ playlists consist of content delivered as files. For example, when the Maury show is delivered as a file, we configure the linear playlist to insert ad spots after each segment.

Network affiliation determines each station’s resolution. Our ABC, Fox, CW, and MyTV affiliates broadcast in 720p, while CBS and NBC broadcast in 1080i. We transcode all media into these two resolutions to match the broadcast chain.
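This rule is simple enough to express as a lookup table. The sketch below is illustrative only: the dictionary and function names are hypothetical and do not reflect how our transcode pipeline is actually configured.

```python
# Target broadcast format per network affiliation, as described above.
AFFILIATE_FORMAT = {
    "ABC": "720p",
    "FOX": "720p",
    "CW": "720p",
    "MYTV": "720p",
    "CBS": "1080i",
    "NBC": "1080i",
}


def target_format(affiliation: str) -> str:
    """Return the broadcast format for a station's network affiliation."""
    key = affiliation.strip().upper()
    if key not in AFFILIATE_FORMAT:
        raise ValueError(f"Unknown affiliation: {affiliation!r}")
    return AFFILIATE_FORMAT[key]


def required_renditions() -> list[str]:
    """Formats every media file must be transcoded into to serve all stations."""
    return sorted(set(AFFILIATE_FORMAT.values()))


print(target_format("Fox"), target_format("NBC"), required_renditions())
```

Keeping the mapping in one place means a file is transcoded once per format rather than once per station.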

Cloud-Based ATSC 3.0 Air Chain

The output of Amagi playout feeds directly into the ATSC 3.0 air chain—eliminating the need to send content back to a station for additional encoding. This “stay-in-the-cloud” approach improves efficiency and reduces latency.

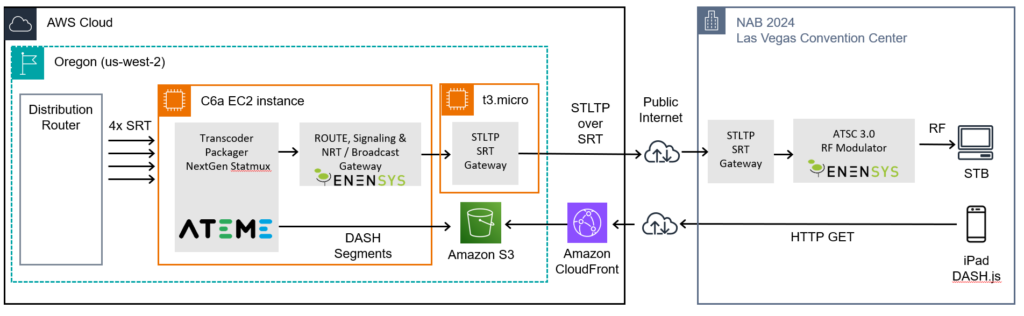

At NAB 2024, Ateme, AWS, and Enensys demonstrated the feasibility of running an ATSC 3.0 air chain entirely in the cloud. Since then, the cloud ecosystem for ATSC 3.0 has grown, with DigiCAP and other vendors now offering cloud-ready solutions.

Key ATSC 3.0 Cloud Components

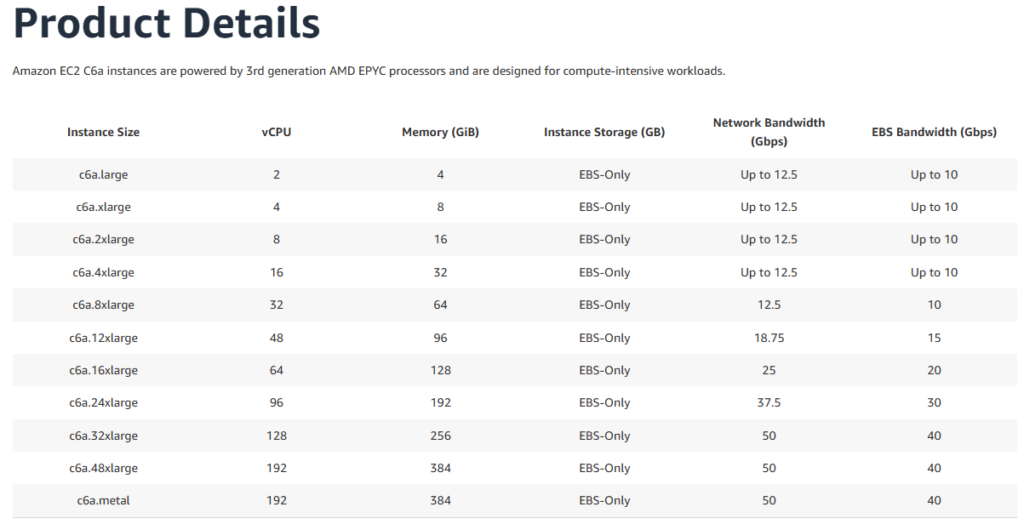

The encoder receives a transport stream from master control and encodes it into DASH segments with AC-4 audio and IMSC1 caption files. The segments are then delivered via unicast WebDAV to the ROUTE, signaling, and broadcast gateway components. The encoder runs on its own EC2 compute instance in AWS. C6a instances are powered by AMD EPYC processors, and sizing ranges from 2 vCPUs up to 192 vCPUs [4].

The ATSC 3.0 packager receives the DASH segments and assembles them into a multicast stream. The packager injects signaling data into the stream, including Low Level Signaling (LLS) tables such as the Service List Table (SLT), and Service Layer Signaling (SLS) tables; together these are ATSC 3.0’s equivalent of PSIP in ATSC 1.0. The SLT defines each service’s name and virtual channel and tells the decoder where to find the SLS. The SLS defines where the multicast video, audio, and closed-caption components are located. These tables provide crucial metadata that allows end-user devices to locate and decode services.
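The two-step lookup a receiver performs can be sketched as follows. This is a simplified model rather than the actual table syntax: the class names, field names, service name, and multicast addresses are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SltEntry:
    """One service listed in the SLT (simplified)."""
    service_name: str
    virtual_channel: str
    sls_destination: tuple[str, int]  # multicast address and port carrying the SLS


@dataclass(frozen=True)
class Sls:
    """Service Layer Signaling for one service (simplified)."""
    components: dict[str, tuple[str, int]]  # component kind -> multicast address and port


def locate_components(slt, sls_tables, virtual_channel):
    """Follow SLT -> SLS to find where a service's components are carried."""
    for entry in slt:
        if entry.virtual_channel == virtual_channel:
            return sls_tables[entry.sls_destination].components
    raise LookupError(f"No service on virtual channel {virtual_channel}")


# Hypothetical signaling for a single service.
SLT = [SltEntry("Example HD", "5.1", ("239.255.10.1", 5000))]
SLS_TABLES = {
    ("239.255.10.1", 5000): Sls(
        {
            "video": ("239.255.10.1", 5001),
            "audio": ("239.255.10.1", 5002),
            "captions": ("239.255.10.1", 5003),
        }
    )
}

print(locate_components(SLT, SLS_TABLES, "5.1"))
```

The receiver first reads the SLT to learn which services exist, then fetches only the SLS of the service the viewer selects.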

The broadcast gateway manages the physical layer parameters for transmission. It defines the Physical Layer Pipes (PLPs); for example, PLP 0 might be a robust 16-QAM layer for mobile reception, while PLP 1 carries HD services. It also ensures correct sequencing of IP packets before compiling them into STLTP (Studio-to-Transmitter Link Transport Protocol).
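The trade-off between robustness and capacity across PLPs can be made concrete with a small calculation. In the sketch below, the 16-QAM setting for PLP 0 comes from the example above, while the code rates and the 256-QAM setting for PLP 1 are assumptions chosen for illustration. The figure computed is information bits per data cell before other overheads, not a deliverable bitrate.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Plp:
    """Simplified description of one Physical Layer Pipe."""
    plp_id: int
    constellation_points: int  # e.g. 16 for 16-QAM
    code_rate: Fraction        # share of transmitted bits that carry payload

    def info_bits_per_cell(self) -> Fraction:
        """Payload bits carried by each data cell."""
        bits_per_cell = self.constellation_points.bit_length() - 1  # log2 of a power of two
        return bits_per_cell * self.code_rate


# Hypothetical settings: a robust mobile pipe and a higher-capacity HD pipe.
plp0 = Plp(plp_id=0, constellation_points=16, code_rate=Fraction(5, 15))
plp1 = Plp(plp_id=1, constellation_points=256, code_rate=Fraction(10, 15))

for plp in (plp0, plp1):
    print(f"PLP {plp.plp_id}: {float(plp.info_bits_per_cell()):.2f} information bits per cell")
```

With these assumed settings, the robust pipe carries about 1.33 information bits per cell and the HD pipe about 5.33, so the mobile layer gives up roughly three quarters of the capacity in exchange for easier reception.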

STLTP is a UDP-based transport protocol, with FEC added to the UDP flow. To ensure reliability over public IP networks, the NAB 2024 demo encapsulated STLTP within a protected transport protocol. Various protection mechanisms are available, including RIST, SRT, and Zixi. In the demo, SRT was selected for its bidirectional handshake (performed over UDP rather than TCP) and its retransmission of lost packets, which together create a reliable tunnel for UDP packet transport. On the receiving end, the SRT tunnel is unwrapped, restoring the raw UDP stream for transmission. Once the STLTP is back in UDP form, it is ready to be sent to the ATSC 3.0 transmitter.
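The principle behind forward error correction can be shown with a single XOR parity packet protecting a group of equal-length packets. This is a minimal sketch of the general idea only; it is not the FEC packet format that STLTP actually uses, and the function names are hypothetical.

```python
from typing import Optional


def xor_parity(packets: list[bytes]) -> bytes:
    """XOR equal-length packets together to form one parity packet."""
    parity = bytearray(len(packets[0]))
    for packet in packets:
        for i, byte in enumerate(packet):
            parity[i] ^= byte
    return bytes(parity)


def recover_missing(received: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """Rebuild at most one lost packet (marked None) from the parity packet."""
    missing = [i for i, packet in enumerate(received) if packet is None]
    if len(missing) > 1:
        raise ValueError("A single parity packet can repair only one loss")
    restored = list(received)
    if missing:
        present = [packet for packet in received if packet is not None]
        # XOR of the parity with every surviving packet yields the lost packet.
        restored[missing[0]] = xor_parity(present + [parity])
    return restored


group = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_parity(group)
damaged = [group[0], None, group[2]]  # the second packet was lost in transit
print(recover_missing(damaged, parity) == group)
```

Unlike retransmission, this repair needs no return path and adds no round-trip delay, but it can only correct as many losses as the parity data allows, which is why the demo also wrapped the flow in a protected transport.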

Hybrid Offerings – Ensuring Reliability in the Cloud

“The cloud never fails!” While this statement reflects the cloud’s resilience, failures—whether in cloud infrastructure or network connectivity—are always a possibility.

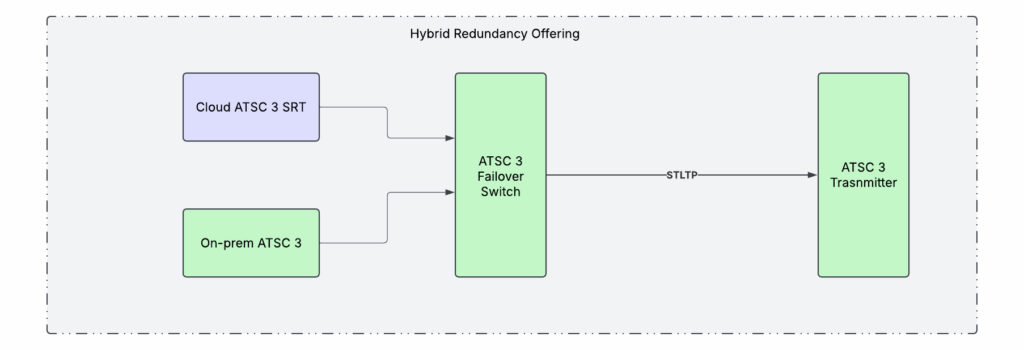

To mitigate risk, each station is equipped with a Disaster Recovery (DR) server that mirrors the cloud playout system. This server provides a baseband output on-premises, enabling the integration of a standby air chain. This hybrid approach includes an ATSC 3.0 encoder, packager, and gateway.

Both the cloud-based SRT feed and the on-prem standby air chain are routed to a redundancy failover device. This device continuously probes the STLTP feed to ensure valid payload data is present. If the primary cloud feed encounters issues, the system automatically switches to the on-prem backup.
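The switching decision can be sketched as a small function. This is a simplified illustration rather than the logic of any particular failover product: the `FeedStatus` type, the field names, and the one-second timeout are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FeedStatus:
    """Health of one STLTP feed as seen by the failover device (simplified)."""
    name: str
    seconds_since_valid_payload: float


def select_feed(
    primary: FeedStatus, backup: FeedStatus, timeout_s: float = 1.0
) -> Optional[str]:
    """Prefer the primary feed; fall back when it stops carrying valid payload.

    Returns the name of the feed to put on air, or None if neither is healthy.
    """
    if primary.seconds_since_valid_payload <= timeout_s:
        return primary.name
    if backup.seconds_since_valid_payload <= timeout_s:
        return backup.name
    return None  # nothing valid to transmit; raise an alarm upstream


cloud = FeedStatus("cloud-srt", seconds_since_valid_payload=4.2)
on_prem = FeedStatus("on-prem", seconds_since_valid_payload=0.1)
print(select_feed(cloud, on_prem))
```

Checking for valid payload rather than mere packet arrival matters here, since a feed can keep delivering packets while carrying nothing the transmitter can use.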

Conclusion

In the early days of ATSC 3.0 deployment, the various technologies and implementations were largely unproven. Like any new standard, time and industry adoption have led to maturity. Today, we have seasoned encoders, packagers, and gateways that fully support the evolving ATSC 3.0 ecosystem.

As the ATSC 3.0 standard matures, so does vendor support. A few years ago, deploying an ATSC 3.0 air chain in the cloud would have been impractical because neither the technology nor the vendor infrastructure was ready. Now, cloud-based broadcasting is not only viable but also strategically advantageous, and large-scale implementations are underway.

Leveraging the cloud as the primary infrastructure accelerates station deployments. By eliminating the need for on-site physical server installations, complex cabling, and manual network configuration, cloud-based deployment significantly reduces the time required for station setup.

As our industry gears up for an anticipated sunsetting of ATSC 1.0, the agility of these approaches will become more important, because stations will need to transition to ATSC 3.0 rapidly. Sinclair has deployed 35 host stations and 12 hosted sites over the past four years. However, a mandated transition would require a much faster, planned, and organized deployment. By leveraging the cloud, broadcasters can scale and streamline this process dramatically.

We have reached a turning point where technology has matured, and we can finally harness the cloud’s full potential in the broadcast industry.

References

[1] Hamri, Walid (2024). Transforming Master Control to the Cloud [Unpublished PowerPoint slides]. Sinclair Broadcast Group.

[2] AVW Group (n.d.). Internet video protocols: Zixi, SRT and RIST comparison. Retrieved January 31, 2025, from https://www.avw.com.au/images/wellav/Internet_Video_Protocols.pdf

[3] Kauffman, Boris (2024). NAB 2024 ATSC 3 Demo [Unpublished PowerPoint slides]. Amazon Web Services.

[4] Amazon Web Services (2025). Amazon EC2 C6a instances. AWS. https://aws.amazon.com/ec2/instance-types/c6a/