Dynamic Spectrum Allocation in ATSC 3.0

Spectrum is a broadcaster’s most constrained and most valuable asset. The transition to ATSC 3.0 delivers dramatic efficiency gains, but many real-world deployments are still leaving capacity on the table. This post is based on Nick Hottinger’s 2025 NAB white paper, “Optimizing ATSC 3.0 Spectrum Utilization with Dynamic Resource Allocation and Management.” We’ll take a pragmatic look at how dynamic spectrum allocation and resource management can move ATSC 3.0 from “more efficient than 1.0” to the fully optimized system the new standard enables, even under the constraints of lighthouse operations and multi-station channel sharing.

This post walks through practical approaches to maximizing spectrum efficiency in ATSC 3.0 systems, with a focus on real-world deployments, lighthouse constraints, and operational realities familiar to anyone running a broadcast plant today.

Why Spectrum Efficiency Is No Longer Optional

The days when broadcasters could afford to leave capacity unused are over. Incentive auctions permanently reduced the amount of UHF spectrum available for television, while regulatory and ownership constraints limit how broadcasters can respond structurally. Meanwhile, broadcasters are being asked to deliver more, including higher video quality, mobile-friendly services, emergency data, and non-traditional IP-based applications.

ATSC 3.0 dramatically improves the situation, but efficiency is not automatic. Many early deployments are understandably conservative, especially in lighthouse environments where multiple stations are sharing a single RF channel. In those cases, inefficiencies show up quickly as padded bitrates, unused payload, or null packets that represent lost opportunity. Getting real value out of ATSC 3.0 requires active management, not just a standards upgrade.

The Step Change From ATSC 1.0 to ATSC 3.0

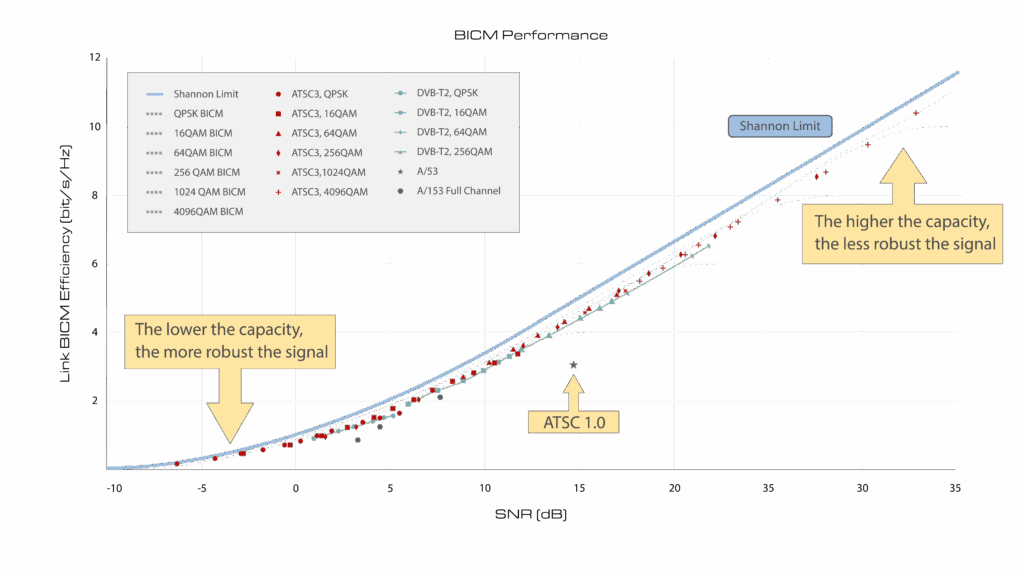

ATSC 1.0 was built around fixed assumptions. A single modulation scheme, a fixed payload of 19.39 Mbps, and MPEG-2 video defined both the ceiling and the floor of what was possible. ATSC 3.0 breaks those constraints. OFDM modulation operates much closer to the Shannon limit and performs significantly better in multipath environments. Physical Layer Pipes (PLPs) allow broadcasters to trade robustness for capacity on a per-service basis instead of locking the entire channel into one compromise configuration.

When combined with HEVC, the efficiency gains are substantial. A high-quality HD service that once consumed more than half of an ATSC 1.0 channel can now occupy a small fraction of an ATSC 3.0 payload with equivalent viewer experience. That efficiency is real, but it only becomes usable if the system is designed to adapt rather than remain fixed.
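To put "closer to the Shannon limit" in rough numbers, the sketch below computes the Shannon capacity C = B log2(1 + SNR) for a 6 MHz channel and compares it with the fixed 19.39 Mbps ATSC 1.0 payload. The 15 dB SNR is an assumption, chosen as an approximate ATSC 1.0 reception threshold, and the result is an idealized bound rather than a measurement of any real system.

```python
import math

def shannon_capacity_bps(bandwidth_hz, snr_db):
    """Shannon capacity C = B * log2(1 + SNR), with SNR given in dB."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_hz * math.log2(1 + snr_linear)

CHANNEL_HZ = 6e6            # US television channel bandwidth
ATSC1_PAYLOAD_BPS = 19.39e6  # fixed ATSC 1.0 payload
ASSUMED_SNR_DB = 15.0        # assumption: approximate ATSC 1.0 threshold

limit_bps = shannon_capacity_bps(CHANNEL_HZ, ASSUMED_SNR_DB)
fraction = ATSC1_PAYLOAD_BPS / limit_bps

print(f"Shannon limit at {ASSUMED_SNR_DB} dB: {limit_bps / 1e6:.1f} Mbps")
print(f"ATSC 1.0 payload as a share of that limit: {fraction:.0%}")
```

Under that assumption the theoretical limit is roughly 30 Mbps, so the fixed ATSC 1.0 payload sits at about two thirds of what the channel could carry at the same SNR.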

A Practical ATSC 3.0 Air Chain

Most ATSC 3.0 deployments today follow a fairly standard architecture. Distribution feeds are ingested by encoders, transcoded to HEVC with AC-4 audio, segmented into DASH or MPU, and packaged into ROUTE or MMTP with appropriate service signaling. A scheduler then assembles the physical layer parameters and produces an STLTP stream for the transmitter.

There is nothing exotic about this chain, and that is part of the point. Significant efficiency gains can be achieved using equipment broadcasters already deploy, provided those components are coordinated effectively.

Statistical Multiplexing as the Foundation

Statistical multiplexing is one of the most important tools for improving spectrum utilization in ATSC 3.0. Instead of treating each service as a fixed-rate stream, a statmux dynamically allocates bandwidth based on real-time content complexity. This smooths bitrate peaks, reduces the need for padding, and allows the total service pool to closely track available PLP capacity.

In practice, statmuxing can improve usable efficiency by roughly twenty percent, but its real value is architectural. Defining a single bitrate pool that precisely matches PLP capacity minimizes or eliminates null packets. More importantly, capacity can be adjusted centrally without retuning individual services. Minimum bitrates, priorities, and service protections remain intact while the system reallocates bandwidth where it is needed most.
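The pooling idea can be sketched in a few lines. This is a simplified illustration, not how any particular statmux works: the `Service` fields, the `complexity` metric, and the proportional weighting rule are all assumptions made for the example. Each service keeps its protected minimum, and the remaining pool is shared according to priority and momentary content complexity so that the total always equals the pool.

```python
from dataclasses import dataclass

@dataclass
class Service:
    name: str
    min_kbps: int         # protected floor for this service
    complexity: float     # hypothetical per-interval complexity score from the encoder
    priority: float = 1.0

def allocate_pool(services, pool_kbps):
    """Give every service its floor, then share the rest by priority * complexity."""
    floor_total = sum(s.min_kbps for s in services)
    if floor_total > pool_kbps:
        raise ValueError("minimum bitrates exceed the pool")
    spare = pool_kbps - floor_total
    weights = [s.priority * s.complexity for s in services]
    total_weight = sum(weights)
    alloc = {}
    for s, w in zip(services, weights):
        share = int(spare * w / total_weight) if total_weight > 0 else 0
        alloc[s.name] = s.min_kbps + share
    # Hand any rounding remainder to the heaviest service so no capacity is left as padding.
    leftover = pool_kbps - sum(alloc.values())
    if leftover and services:
        heaviest = max(zip(services, weights), key=lambda pair: pair[1])[0]
        alloc[heaviest.name] += leftover
    return alloc

# Illustrative values only.
services = [
    Service("news", min_kbps=2000, complexity=1.0),
    Service("sports", min_kbps=3000, complexity=3.0),
    Service("subchannel", min_kbps=1000, complexity=0.5),
]
allocation = allocate_pool(services, pool_kbps=20000)
print(allocation, "total:", sum(allocation.values()))
```

Because the pool size is a single argument, shrinking or growing it changes every service's share in one step while the floors stay protected, which is the central adjustment described above.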

Orchestration Through System Management

Dynamic allocation only works if there is a control layer capable of coordinating it. Modern ATSC 3.0 systems increasingly rely on orchestration platforms that can interact with encoders, packagers, statmuxes, and schedulers as a unified system.

At a local level, a system manager controls the broadcast air chain and executes changes to channel resources. At a higher level, a broadcast core network or equivalent platform can negotiate capacity across stations, integrate with backend systems, and respond to external triggers. This architecture allows capacity changes to be automated, scheduled, or event-driven rather than manually engineered under pressure.

Physical layer changes remain the most disruptive option, since receivers may need to reacquire the signal, but even these can be managed effectively with proper timing and signaling. The key shift is that channel configuration becomes something that can change over time instead of something frozen at launch.

Supporting Short-Term, High-Value Use Cases

Many of the most compelling ATSC 3.0 applications require bandwidth only temporarily. Advanced Emergency Information is a good example. Rich media, real-time updates, and associated data can be critical during an emergency, but they are not needed continuously.

In a fully utilized channel, there is no spare capacity waiting for these events. Dynamic allocation solves this by temporarily reallocating bandwidth. A backend system detects the trigger, determines how much capacity is needed and for how long, and instructs the system manager to adjust the statmux pool or other parameters. Once the event concludes, the channel returns to its normal operating state without service interruption.
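The trigger, borrow, and restore sequence can be sketched as follows. `SystemManager`, `set_split`, and `temporary_data_capacity` are hypothetical names invented for this illustration and do not correspond to any vendor API. The figures are illustrative as well. The sketch assumes the backend has already decided how much capacity the event needs.

```python
from contextlib import contextmanager

class SystemManager:
    """Hypothetical stand-in for the system manager that controls one PLP."""
    def __init__(self, plp_capacity_kbps):
        self.plp_capacity_kbps = plp_capacity_kbps
        self.video_pool_kbps = plp_capacity_kbps  # statmux pool normally fills the PLP
        self.data_kbps = 0
        self.history = []                         # record of every applied split

    def set_split(self, video_pool_kbps, data_kbps):
        if video_pool_kbps + data_kbps > self.plp_capacity_kbps:
            raise ValueError("split exceeds PLP capacity")
        self.video_pool_kbps = video_pool_kbps
        self.data_kbps = data_kbps
        self.history.append((video_pool_kbps, data_kbps))

@contextmanager
def temporary_data_capacity(manager, needed_kbps, video_floor_kbps):
    """Borrow capacity from the statmux pool for an event, then restore the normal state."""
    normal = (manager.video_pool_kbps, manager.data_kbps)
    reduced_pool = manager.video_pool_kbps - needed_kbps
    if reduced_pool < video_floor_kbps:
        raise ValueError("request would push video below its protected floor")
    manager.set_split(reduced_pool, manager.data_kbps + needed_kbps)
    try:
        yield manager
    finally:
        manager.set_split(*normal)  # restore even if the event handler fails

manager = SystemManager(plp_capacity_kbps=25000)
with temporary_data_capacity(manager, needed_kbps=3000, video_floor_kbps=18000):
    during_event = (manager.video_pool_kbps, manager.data_kbps)
after_event = (manager.video_pool_kbps, manager.data_kbps)
print("during:", during_event, "after:", after_event)
```

Wrapping the change in a context manager is one way to guarantee the channel returns to its normal split when the event ends, including when something goes wrong mid-event.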

The same mechanism applies to file delivery, CDN offload, software updates, or other data-centric services. Capacity becomes something that is scheduled and valued, not something permanently reserved.

Thinking About Spectrum as an Economic Resource

Not all bits cost the same to deliver. A service carried on a highly robust PLP consumes far more spectral resources than the same bitrate delivered under less demanding reception conditions. From a planning and monetization perspective, this matters.

One useful way to think about spectrum is in terms of dollars per hertz-second. By understanding how much of a channel a service consumes and for how long, broadcasters can make informed decisions about pricing, prioritization, and tradeoffs. Dynamic allocation makes those calculations visible and actionable instead of theoretical.
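A minimal sketch of the hertz-second arithmetic follows. It assumes each PLP configuration can be summarized by an effective spectral efficiency in bits per second per hertz. The efficiency values and the price are made up for illustration and are not taken from the white paper.

```python
def hertz_seconds(bitrate_bps, spectral_eff_bps_per_hz, duration_s):
    """Spectrum consumed: bandwidth occupied (Hz) multiplied by time on air (s)."""
    occupied_hz = bitrate_bps / spectral_eff_bps_per_hz
    return occupied_hz * duration_s

def delivery_cost(bitrate_bps, spectral_eff_bps_per_hz, duration_s, price_per_hz_s):
    """Cost of a service at a given price in dollars per hertz-second."""
    return hertz_seconds(bitrate_bps, spectral_eff_bps_per_hz, duration_s) * price_per_hz_s

# Same 2 Mbps service for one hour on two hypothetical PLP configurations.
BITRATE_BPS = 2e6
DURATION_S = 3600
PRICE_PER_HZ_S = 1e-9   # hypothetical price, dollars per hertz-second

robust = hertz_seconds(BITRATE_BPS, 1.0, DURATION_S)         # assumed 1.0 bps/Hz, very robust
high_capacity = hertz_seconds(BITRATE_BPS, 4.0, DURATION_S)  # assumed 4.0 bps/Hz, less robust

print(f"robust PLP:        {robust:.2e} Hz*s")
print(f"high-capacity PLP: {high_capacity:.2e} Hz*s")
print(f"ratio: {robust / high_capacity:.1f}x")
print(f"robust cost: ${delivery_cost(BITRATE_BPS, 1.0, DURATION_S, PRICE_PER_HZ_S):.2f}")
```

With these assumed efficiencies, the robust configuration consumes four times the spectrum for the same bitrate, which is the gap a per-hertz-second price makes explicit.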

Closing Thoughts

ATSC 3.0 gives broadcasters the tools to rethink how spectrum is used, but efficiency is not a default setting. It is the result of deliberate system design, centralized control, and a willingness to let the channel adapt to real-world needs. By combining statistical multiplexing, orchestration, and flexible physical layer management, broadcasters can approach full channel utilization while enabling services that were not practical in earlier standards.

For engineers, this means shifting from static channel design to dynamic resource management. For broadcasters, it means unlocking both operational resilience and new revenue opportunities. The technology is already here. The challenge now is using it to its full potential.